How to keep AI coding agents from messing up (your code, and your brain)

While using coding agents might help you ship your code faster, it might cost you your next job interview if you're not careful when you use them.

We’ve already discussed in a previous newsletter how the use of LLMs actually makes us dumber. This is backed by an MIT study, and here’s the link to the discussion in case you missed it.

So in case you’re afraid of AI taking your job, the best antidote is to make sure you’re still learning while you’re using these tools. Be it for writing, coding, or turning the AC on and off in summer.

Today we will be focusing on coding agents, and how we can make the most out of them while still protecting our own human neural networks.

Because here’s the thing: coding agents aren’t a threat to your job. Coding agents used the wrong way are a threat to your job. The ones who give in to laziness and just let Claude write their entire codebase without understanding it? Yeah, they’re vulnerable.

The ones who use agents as a thinking partner, someone who executes your vision instead of replacing your vision? Those people are accelerating past everyone else.

The difference isn’t the tool. It’s the discipline.

LLMs are here to stay

It would be foolish to tell you not to use coding agents, or to pretend they’re not getting better by the minute. The internet seems to be reaching a consensus around Claude and its coding capabilities. Anthropic focused from day one on making their LLM very, very good at one thing: coding.

And it shows. Claude excels at creating code. The caveat? Well, the less you code, the less you code.

Someone had to state the obvious.

This has two main consequences, and they’re not subtle:

The first: The less experience you have as a coder, the worse the results you’ll get from agents. You’ll get code that looks like it works. It compiles. It runs. But it’s fragile in ways you won’t see until production breaks it.

The second: The less you understand your own codebase, the less likely you’ll pass a live-coding assessment or actually convince someone in a job interview that you built what you built. Because you didn’t. Your agent did. And when they ask you why you chose SQL over NoSQL, or why your database schema looks like that, you’ll freeze.

The code might seem to work at first. But any experienced programmer knows that simply vibe-coding your way to a product without proper testing, proper architecture, and proper understanding will only increase technical debt. The second you have to change things (and you will have to change things) chances are that most of your code (if not all of it) becomes useless.

It’s like building a house on sand and wondering why the walls crack.

Now, if you have some coding experience and you actually know what you’re doing? The results are wild. You point the agent in the right direction. You understand what it generated. You review it. You test it. You commit it. And suddenly you’re shipping 10x faster while actually getting smarter about the problem space. No wonder Anthropic’s CEO said in early 2025 that AI would end up writing 90% of the code within three to six months.

While that didn’t happen, it is true that more and more of the code is being written by agents (this is a good summary). We can be sure that with the right operator, they’re a force multiplier.

The real trick is simple but not easy. It’s composed of two things:

1. Guidance. Not just knowing what you want, but knowing why you want it. Knowing which tools go together. SQL or NoSQL? Which database engine? How do these systems talk to each other? How do we lay a foundation where everything works synergistically? This is what only experienced engineers know. You give the agent a blueprint, not a wishlist.

2. Testing. Actually verifying that what the agent built does what you asked it to do. And not just “does it run?” but does it work under load? With bad data? In edge cases? Testing is where the fake code gets exposed.
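To make the testing point concrete, here is a minimal sketch of what "not just does it run" can look like. Everything in it is an assumption for illustration: `parse_quantity` stands in for some small helper an agent might have written, and the limit of 1000 is made up. It checks the happy path, bad data, and boundary values. It does not cover load, which needs its own tooling.

```python
def parse_quantity(raw: str, max_quantity: int = 1000) -> int:
    """Hypothetical agent-written helper: parse an order quantity from user input."""
    text = raw.strip()
    # Only plain ASCII digits pass: this rejects "", signs, decimals, and words.
    if not (text.isascii() and text.isdigit()):
        raise ValueError(f"not a whole number: {raw!r}")
    quantity = int(text)
    if quantity == 0 or quantity > max_quantity:
        raise ValueError(f"quantity out of range: {quantity}")
    return quantity


def rejects(raw: str) -> bool:
    """Return True if parse_quantity refuses the input with ValueError."""
    try:
        parse_quantity(raw)
    except ValueError:
        return True
    return False


def test_happy_path():
    assert parse_quantity("3") == 3
    assert parse_quantity("  42\n") == 42


def test_bad_data():
    # The inputs a demo never tries but real users always do.
    for raw in ["", "   ", "abc", "-1", "2.5", "1e3"]:
        assert rejects(raw), raw


def test_edge_cases():
    # Both ends of the allowed range, and one step past each.
    assert parse_quantity("1") == 1
    assert parse_quantity("1000") == 1000
    assert rejects("0")
    assert rejects("1001")


if __name__ == "__main__":
    test_happy_path()
    test_bad_data()
    test_edge_cases()
    print("all checks passed")
```

The useful part is less the helper than the habit: you write the list of bad inputs yourself, because that list is where your judgment shows up.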

And as a little bonus, think of git as your best friend. I find myself making commits a lot more often than before, because they serve as a checkpoint in case I get a little too excited about the vibe-coding results of a well-guided agent.

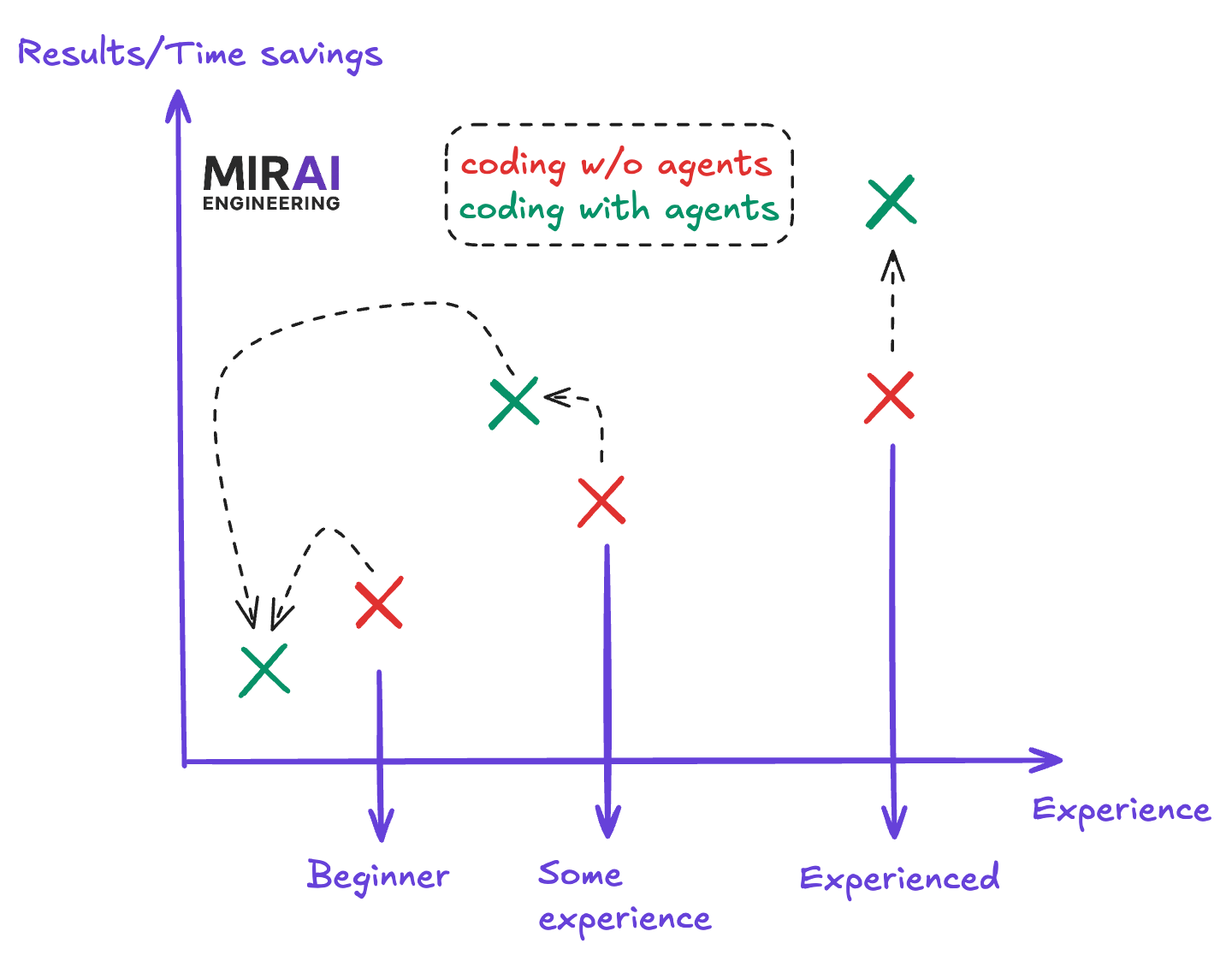

This image isn’t backed by any research, but as we say in Chile, “I have no proof, but I also have no doubts.”

See the pattern? Beginners using agents without guidance get worse results than beginners coding from scratch. They're vibe-coding. But experienced engineers using agents with clear direction? The time savings skyrocket. Because they're not replacing their thinking, they're amplifying it.

Now let’s give some useful advice

Think of an agent as an intern who has:

10x your memory capacity (can hold entire codebases in context)

10,000x your research capabilities (basically Google integrated into your IDE)

0x your judgment

The good news? You have 10x the agent’s experience to create a roadmap and actually ship something professional.

The trick is forcing yourself to think first.

Here’s where most people mess up: they ask the agent to build something without knowing what they actually want. Then they’re surprised when the agent goes in circles or builds something that doesn’t fit. The agent isn’t the problem. The lack of clarity is.

So write it down. Not the code. The requirements. You, not the agent.

What are you building? Why? What’s the data flow? What breaks if you get it wrong? What are the non-negotiables? What can you compromise on? This is the thinking work. This is the stuff that actually protects your brain and your next job interview.

Because here’s the secret: forcing yourself to plan everything out before you touch code makes you exponentially better at building systems. The agent does better work because you’ve thought it through. You understand every decision. You can defend every choice. And when the agent makes a mistake, you catch it because you know what the right answer should be.
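One way to turn the "write it down" habit into something you can't skip is a tiny template that refuses to produce a brief until every question has an answer. This is only a sketch under stated assumptions: the `RequirementsDoc` class, its field names, and the invoice example are all hypothetical, chosen to mirror the questions above.

```python
from __future__ import annotations

from dataclasses import dataclass, field, fields


@dataclass
class RequirementsDoc:
    """Hypothetical template: one field per question you answer before prompting."""

    what: str = ""
    why: str = ""
    data_flow: str = ""
    failure_modes: str = ""
    non_negotiables: list[str] = field(default_factory=list)
    compromises: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Names of the sections that are still empty or whitespace-only."""
        missing = []
        for f in fields(self):
            value = getattr(self, f.name)
            blank = not value.strip() if isinstance(value, str) else not value
            if blank:
                missing.append(f.name)
        return missing

    def to_markdown(self) -> str:
        """Render the brief, but only once every section has an answer."""
        missing = self.missing_sections()
        if missing:
            raise ValueError(f"answer these first: {', '.join(missing)}")
        lines = []
        for f in fields(self):
            value = getattr(self, f.name)
            lines.append(f"## {f.name.replace('_', ' ').capitalize()}")
            if isinstance(value, list):
                lines.extend(f"- {item}" for item in value)
            else:
                lines.append(value)
            lines.append("")
        return "\n".join(lines)


# Made-up example: the brief is incomplete until you decide what you can give up.
doc = RequirementsDoc(
    what="Export monthly invoices as CSV",
    why="Finance reconciles them by hand today",
    data_flow="orders table -> export job -> CSV file",
    failure_modes="Duplicate or missing invoices in the export",
    non_negotiables=["Totals match the orders table"],
)
print(doc.missing_sections())  # ['compromises']
```

A plain markdown file does the same job. The point of the check is only that an empty section is a decision you haven't made yet.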

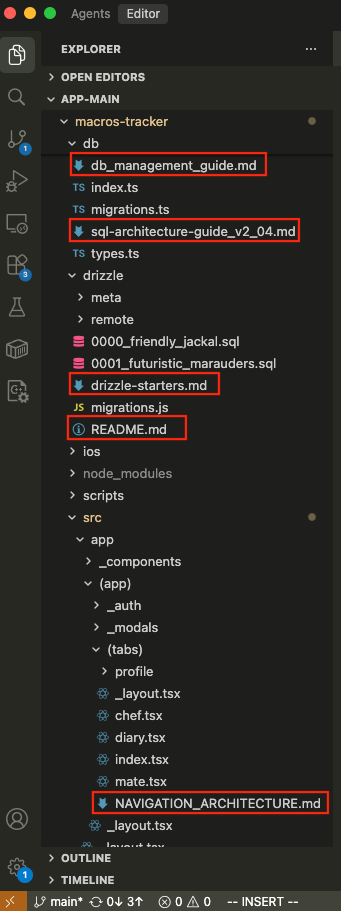

Look at the repo I’m currently working on. It has almost more markdown files than actual code. In the image you can see db_management_guide.md, sql-architecture-guide.md, NAVIGATION_ARCHITECTURE.md, and README.md. There are many more of those throughout the repo, and every single one started as a brainstorm of what I needed and wanted.

The art of actually thinking before acting is something that can be forgotten quite easily (I do it all the time).

And further down, I also have the game-changer: a CLAUDE.md, a file literally containing instructions for the agent on how to work in my codebase.

This looks excessive until you realize: these files aren’t for anyone else. They’re for me and the agent. When I hand off a task, the agent has a blueprint. When I come back three sprints later, I remember exactly why I made each architectural decision. And in a job interview? I can actually explain my code because I documented it. Because I thought before I coded.

The compounding effect is brutal:

Clearer thinking → better requirements → better agent work → better code → better job prospects

Not because you used an agent. But because you forced yourself to think like someone who actually knows what they’re doing.

And this documentation is quite useful for other people working in the repo as well, because reading the documentation before getting hands-on helps a lot.

The real play

Coding agents aren’t lazy mode. They’re leverage mode. But leverage only works if you know what you’re directing.

The best engineers I know aren’t replacing their thinking with agents. They’re replacing their typing. They’re writing docs, thinking through architecture, and letting the agent handle the boilerplate and the repetitive work.

So next time you’re about to fire up Claude to “just build this feature,” stop. Spend 30 minutes writing down what you want. Make it concrete. Make it specific. Make it defensive. Think about what can go wrong.

Then let the agent execute.

Your brain will thank you. And more importantly, your next interviewer will too.

Keep building.